Cut IT costs without reducing capacity

Your company could save millions in IT costs every year by using a resource-driven IT capacity model rather than an architecture-driven one.

Most companies buy IT capacity to support a given application, and the amount of IT capacity purchased is specified by the application team putting the application live. The number of servers is defined according to the architecture of the application, with web servers, application servers (Java machines), database servers and all supporting and connecting services having their own server space. This is architecture-driven IT capacity.

Too many servers

Prior to the “virtualization” of physical servers, this approach equated to discrete boxes with their own function in the application. To prevent “noisy neighbors” – the software being impacted by other software running on the server – the servers were dedicated to the task. Even with virtualization, servers tend to be dedicated, even though they are now running on shared hardware.

This approach uses IT resources inefficiently and is therefore more expensive than it should be. To ensure that application performance isn’t compromised, the servers specified are always oversized, and to prevent impact from other software, they are often dedicated. The result is that companies end up with significantly more servers than they need to run the business, and most of the servers they do have are running below 20% utilization, even at rare peaks.

Almost all IT estates that we analyzed were very lightly loaded and usually had more than 10% of servers which never showed any workload. These servers were often installed and forgotten – orphans in the process.

Wasted cost

Industry cost estimates for running these servers can vary. But if we include the operating system license, the software installed, the storage, backup & restore, the data-center space, power and cooling, and most importantly the system administrator costs to keep them up to date, my best estimate is $7,000 per year to run a server.

"In an IT estate of 20,000 servers, it is not unusual to find 3,000 servers-worth of unused and easily consolidated servers. This equates to more than $20 million a year of wasted cost."
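The arithmetic behind that figure is easy to check. Below is a minimal sketch using the $7,000 per-server estimate and the server counts quoted above; the function and variable names are illustrative only.

```python
def annual_waste(unused_servers: int, cost_per_server: float) -> float:
    """Yearly cost of servers that could be removed or consolidated."""
    return unused_servers * cost_per_server


COST_PER_SERVER = 7_000   # best-estimate yearly running cost per server (from the text)
ESTATE_SIZE = 20_000      # servers in the example estate
UNUSED_SERVERS = 3_000    # unused or easily consolidated servers

waste = annual_waste(UNUSED_SERVERS, COST_PER_SERVER)
unused_share = UNUSED_SERVERS / ESTATE_SIZE

print(f"Unused share of estate: {unused_share:.0%}")
print(f"Wasted cost per year: ${waste:,.0f}")
```

With these inputs the waste comes to $21 million a year, which matches the "more than $20 million" quoted above.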

Is there a better way?

A resource-driven approach is the answer. The architecture-driven approach outlined above persists because the teams defining the requirements are not responsible for what is already running, or are responsible only for the servers their own team uses. No one has the data to control IT resources centrally, so a resource-driven IT capacity approach isn’t possible or attempted. Where a capacity team does exist, it is often used for regulatory compliance, to report on the headroom that important applications have.

However, tools do exist which can capture the utilization of all the resources on all the servers in a very large estate.

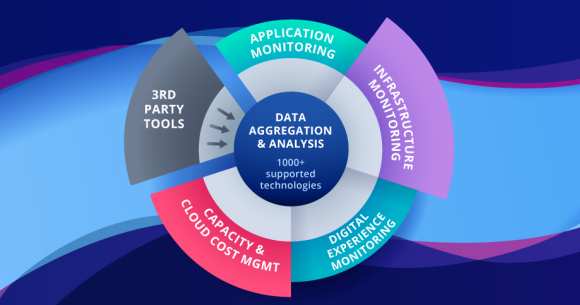

ITRS Capacity Planner

With tools like ITRS Capacity Planner, it is possible for the IT infrastructure team to see how the estate uses its resources with finely tuned granularity, so that rare peak loads are captured. With this tool it is possible to rationalize an existing estate, identifying orphan servers first and then re-using them for new demands as they come in. The low-usage servers can be reviewed to define what other workloads could be moved to that server.
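To make the rationalization step concrete, here is a minimal sketch of how servers might be sorted into orphans, consolidation candidates and busy servers from utilization samples. It is not how ITRS Capacity Planner works internally: the `ServerUsage` class, the `classify` function, the 1% idle threshold and the sample data are all assumptions made for illustration. Only the 20% figure comes from the text. The sketch classifies on peak utilization rather than the average, so that rare peak loads are not averaged away.

```python
from dataclasses import dataclass


@dataclass
class ServerUsage:
    name: str
    cpu_samples: list[float]  # CPU utilization in percent, one value per sampling interval


def classify(server: ServerUsage,
             idle_threshold: float = 1.0,    # assumption: a peak at or below this means no real workload
             low_threshold: float = 20.0) -> str:  # the 20% utilization figure quoted earlier
    """Classify a server by its peak utilization across all samples."""
    peak = max(server.cpu_samples, default=0.0)
    if peak <= idle_threshold:
        return "orphan"
    if peak < low_threshold:
        return "consolidation candidate"
    return "in use"


# Made-up sample data, for illustration only.
estate = [
    ServerUsage("web-01", [3.0, 5.5, 18.0, 4.0]),
    ServerUsage("db-01", [35.0, 60.0, 82.0, 40.0]),
    ServerUsage("old-batch-07", [0.0, 0.2, 0.1, 0.0]),
]

report = {s.name: classify(s) for s in estate}
for name, label in report.items():
    print(f"{name}: {label}")
```

A real analysis would look at memory, storage and network as well as CPU, but the principle is the same: decide from measured usage, not from the original specification.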

Slowly, across a large estate, it is possible to redefine the architecture of the applications to make better use of the servers already purchased. This requires a change in the way new applications are requested. Instead of expecting discrete servers to support an application, the application team requests resources and resource types. Where dedicated resources are needed, they should be specified, and segregation of the workload can be provided.

The IT capacity team within the IT infrastructure team can continually monitor the actual usage of the applications by the type of resource used and can refine the requirement by application based on the actual usage.

“We are moving to the cloud”

Moving to public cloud providers increases the need for resource-driven IT capacity. While it is inefficient to buy and run too much hardware in your own data center, it is significantly more expensive to buy public cloud capacity that you don’t use, or to pay for it when it is not needed. Instances purchased from cloud providers typically double in price for each increment of instance size. So accidentally specifying an instance that is one size too big means you pay double what you should.
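The doubling rule can be expressed as a one-line formula. The sketch below assumes that price doubles exactly with each size step, which is the typical pattern described above rather than any provider's actual price list; the base price and function name are hypothetical.

```python
def instance_price(base_price: float, size_steps: int) -> float:
    """Hourly price of an instance `size_steps` sizes above the smallest,
    assuming each step up doubles the price."""
    return base_price * 2 ** size_steps


BASE_HOURLY = 0.10  # hypothetical hourly price of the smallest instance size

needed = instance_price(BASE_HOURLY, 2)   # the size the workload actually requires
bought = instance_price(BASE_HOURLY, 3)   # one size too big
overspend_ratio = bought / needed

print(f"Needed: ${needed:.2f}/hour, bought: ${bought:.2f}/hour")
print(f"Paying {overspend_ratio:.0f}x what the workload requires")
```

Under the same assumption, an instance two sizes too big would cost four times what is needed, so sizing errors compound quickly.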

ITRS Capacity Planner can do the same analysis of instances running in the public cloud. Using the usage data captured from your actual instances, Capacity Planner can right-size your instances. Then, using data on how long instances run and the reserved capacity already purchased, we can right-buy for you.

We also support recommendations for moving on-premises applications to the cloud, ensuring right-buying and right-sizing before the application is moved.
